Preamble

There is not much to say about this feature, which will never replace an OS-level workload manager such as PRM on HP-UX. It does have the advantage of being very simple to set up but, as usual, like St Thomas, you can only really trust it once you have seen it working.

The aim is simply to limit the CPU used by each instance to the value of the CPU_COUNT initialization parameter, no more, no less.

Instance caging testing

This test has been done with Oracle 11.2.0.2.0 on a Linux box (Red Hat Enterprise Linux Server release 5.4 (Tikanga)) with a Xeon processor (1 socket, 4 cores).

First let's create a small procedure to eat CPU. The CPU must be consumed inside the database and not by a shell script at OS level, otherwise the test would not work:

    CREATE OR REPLACE PROCEDURE eat_cpu(iteration NUMBER) AS
      i NUMBER;
      x NUMBER;
      y NUMBER;
    BEGIN
      i := 1;
      x := 0.7;
      LOOP
        EXIT WHEN i > iteration;
        y := SIN(x);
        y := COS(x);
        y := TAN(x);
        x := y;
        i := i + 1;
      END LOOP;
    END;
    /

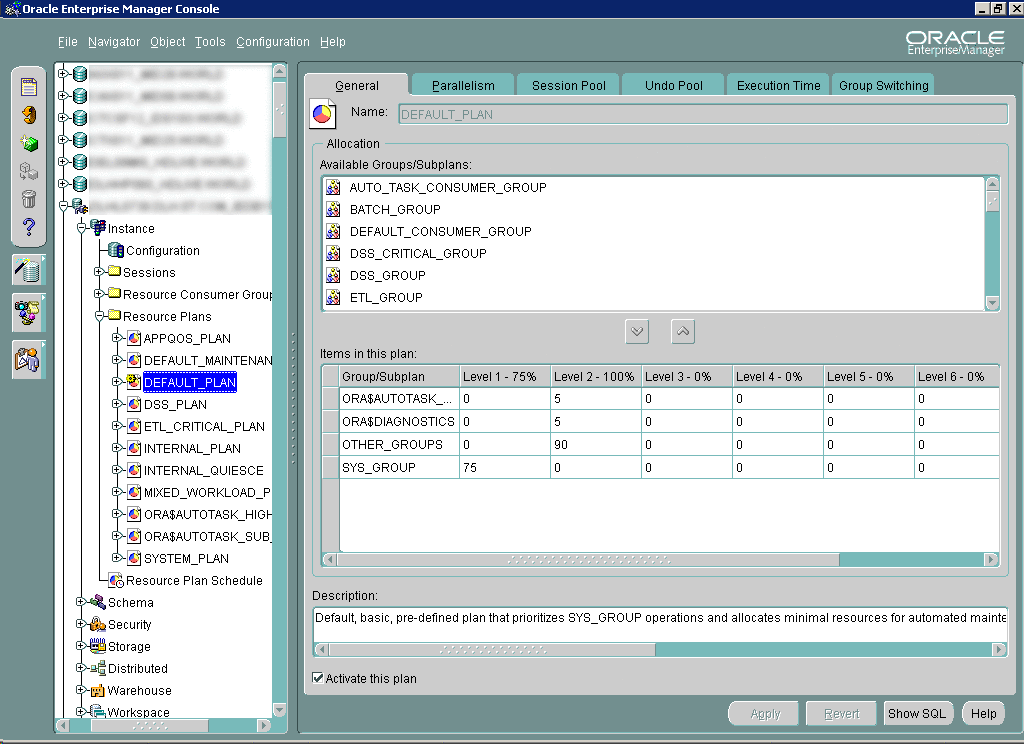

Then you need to activate a resource plan. You can choose DEFAULT_PLAN, which simply favors SYS sessions. You can do it with the graphical interface or simply with:

    SQL> ALTER SYSTEM SET resource_manager_plan='DEFAULT_PLAN';

    System altered.
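Instance caging only takes effect when a resource plan is active and CPU_COUNT has been set explicitly. As a rough sketch, assuming a session with the ALTER SYSTEM privilege and access to the V$ views (the value 1 matches the test described next), setting and checking both could look like this:

```sql
-- Cap the instance at one CPU. CPU_COUNT is dynamic, so no restart is needed.
ALTER SYSTEM SET cpu_count = 1;

-- Check that CPU_COUNT has been set explicitly rather than left at its default.
SELECT value
  FROM v$parameter
 WHERE name = 'cpu_count'
   AND (isdefault = 'FALSE' OR ismodified != 'FALSE');

-- Check that a top-level resource plan is active and managing CPU.
SELECT name
  FROM v$rsrc_plan
 WHERE is_top_plan = 'TRUE'
   AND cpu_managed = 'ON';
```

If either query returns no rows, the cage is most likely not in effect.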

Set the CPU_COUNT parameter to 1; it is simpler to reason about one core used at 100%. Then run the eat_cpu procedure in several sessions (four here). It should take a while to complete, leaving you time to observe:

    SQL> EXEC eat_cpu(9999999);

    PL/SQL procedure successfully completed.
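If opening four SQL*Plus windows is inconvenient, the same load can probably be generated from a single session with background jobs. This is only a sketch, not what was used for this test: the job names EAT_CPU_JOB_1 to EAT_CPU_JOB_4 are made up, and it assumes the CREATE JOB privilege and a non-zero job_queue_processes setting.

```sql
-- Launch four background sessions, each running eat_cpu once.
-- Job names are hypothetical; adjust them to your own naming convention.
BEGIN
  FOR n IN 1 .. 4 LOOP
    DBMS_SCHEDULER.CREATE_JOB(
      job_name   => 'EAT_CPU_JOB_' || n,
      job_type   => 'PLSQL_BLOCK',
      job_action => 'BEGIN eat_cpu(9999999); END;',
      enabled    => TRUE,   -- start immediately
      auto_drop  => TRUE);  -- drop the job once it has run
  END LOOP;
END;
/
```

Scheduler jobs run as ordinary database sessions, so they should be subject to the same cage as interactive ones.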

Then, using the Linux top command, you should see the four sessions sharing a single core. In our example each session uses around 25% of a core.
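The throttling can also be observed from inside the database. A minimal sketch, assuming access to V$SESSION and V$RSRC_CONSUMER_GROUP: sessions held back by Resource Manager normally show up waiting on the resmgr:cpu quantum event, and the per-consumer-group counters show CPU consumed against time spent waiting for CPU.

```sql
-- Sessions currently held back by Resource Manager.
SELECT sid, username, event, state
  FROM v$session
 WHERE event = 'resmgr:cpu quantum';

-- CPU consumed versus time spent waiting for CPU, per consumer group.
SELECT name, active_sessions, consumed_cpu_time, cpu_wait_time
  FROM v$rsrc_consumer_group;
```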

But again, I don't think it is reliable enough for a production environment:

- The granularity is the core: what if there are more instances than cores?

- DBAs will not want to adjust CPU_COUNT manually from time to time, and unused CPU power cannot be shared between instances…
